We have discussed maturity and tools, but how do we quantify the results? In the high-stakes world of AI risk evaluation, vague qualitative statements like “the model looks safe” are insufficient. We need hard numbers and verifiable evidence. This brings us to two critical concepts: AI audit assessment scoring and the AI audit assessment citation.

For Chief Risk Officers and Compliance leads, these two elements are the bedrock of defensibility. If a regulator comes knocking, your AI audit assessment scoring provides the quantitative evidence of safety, while your citations provide the trail that shows where that evidence came from.

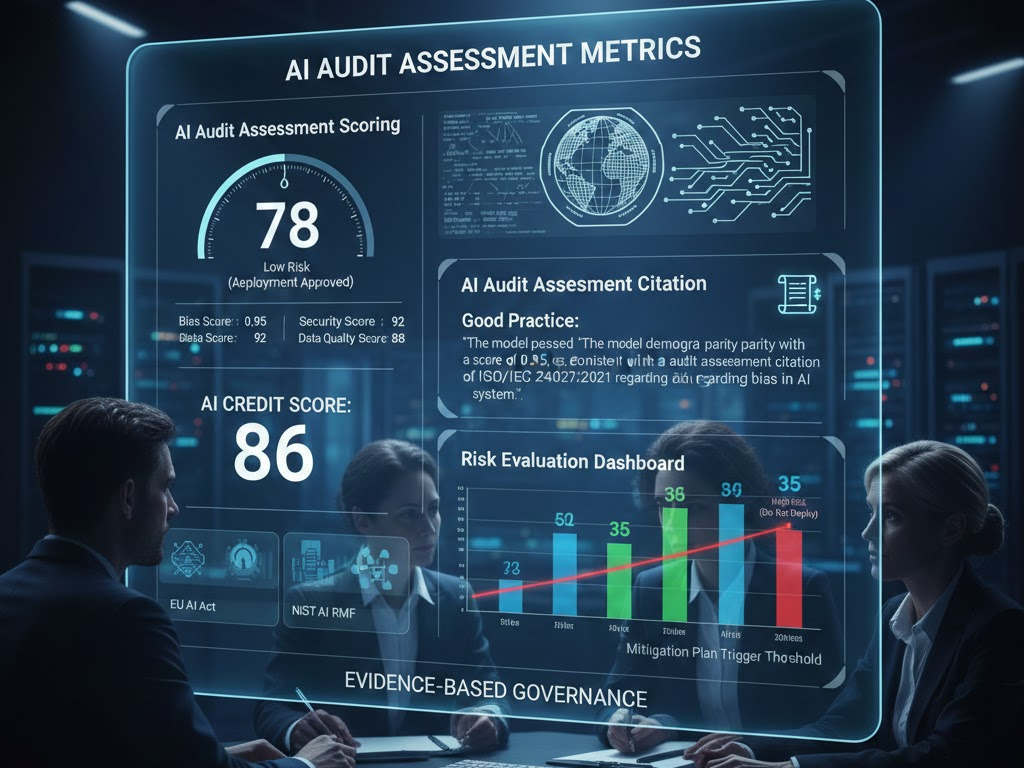

The Science of AI Audit Assessment Scoring

AI audit assessment scoring is the process of assigning numerical values to risk categories. This allows risk to be normalised across different business units. For example, a bank might use a scale of 0 to 100 on which a higher score means lower risk, where:

- 0-40: High Risk (do not deploy).

- 41-70: Medium Risk (deploy with human oversight).

- 71-100: Low Risk (automated deployment approved).
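
The example bands above can be sketched as a small lookup function. This is a minimal illustration, not a prescribed implementation: the function name `risk_tier` is hypothetical, and treating each band's upper bound as inclusive (so that a fractional score such as 40.5 falls into the medium band) is an assumption the article does not specify.

```python
def risk_tier(score: float) -> str:
    """Map a 0-100 audit score to the example bank's deployment tiers.

    Assumption: a higher score means lower risk, and each band's upper
    bound is inclusive (40 is still High Risk, 70 is still Medium Risk).
    """
    if not 0 <= score <= 100:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    if score <= 40:
        return "High Risk (do not deploy)"
    if score <= 70:
        return "Medium Risk (deploy with human oversight)"
    return "Low Risk (automated deployment approved)"


for example_score in (35, 55, 90):
    print(example_score, "->", risk_tier(example_score))
```

Rejecting out-of-range scores, rather than silently clamping them, keeps a data-entry error from being recorded as a valid rating.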

But what goes into an AI audit assessment scoring model? It typically aggregates several sub-scores:

- Bias Score: Derived from the metrics produced by an AI assessment tool (e.g., the Disparate Impact Ratio).

- Security Score: Based on the results of vulnerability and penetration testing.

- Data Quality Score: Based on the completeness and lineage of training data.

The aggregate acts as a “credit score” for your AI. This scoring system is essential for risk evaluation: it lets leadership view a dashboard and see at a glance which models are dragging down the organisation’s overall safety profile.
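
One way to picture the aggregation is a weighted average of the three sub-scores. The sketch below assumes each sub-score is already on a 0 to 100 scale; the weights in `EXAMPLE_WEIGHTS` and the function name `aggregate_score` are hypothetical, since the article does not say how the sub-scores are combined.

```python
from typing import Mapping

# Hypothetical weights for illustration only; choose your own per risk appetite.
EXAMPLE_WEIGHTS = {"bias": 0.40, "security": 0.35, "data_quality": 0.25}


def aggregate_score(
    sub_scores: Mapping[str, float],
    weights: Mapping[str, float] = EXAMPLE_WEIGHTS,
) -> float:
    """Combine 0-100 sub-scores into one 0-100 score via a weighted average."""
    missing = set(weights) - set(sub_scores)
    if missing:
        raise ValueError(f"missing sub-scores: {sorted(missing)}")
    for name in weights:
        if not 0 <= sub_scores[name] <= 100:
            raise ValueError(f"{name} must be between 0 and 100")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    # Dividing by the total weight keeps the result on the 0-100 scale
    # even if the weights do not sum exactly to 1.
    weighted_sum = sum(sub_scores[name] * w for name, w in weights.items())
    return weighted_sum / total_weight


example = {"bias": 80, "security": 60, "data_quality": 90}
print(round(aggregate_score(example), 1))  # prints 75.5
```

A plain average can mask one very weak sub-score behind two strong ones, so some teams may prefer to also enforce a minimum on each sub-score; that variation is left out here.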

The Importance of AI Audit Assessment Citation

While scores give you the “what,” citations give you the “why.” An AI audit assessment citation is a reference to the specific standard, law, or test used to justify a decision. In a professional AI audit assessment, you cannot simply claim a model is robust. You must cite the methodology.

• Bad Practice: “The model is fair.”

• Good Practice: “The model passed the demographic parity test with a score of 0.95. Citation: ISO/IEC TR 24027:2021, regarding bias in AI systems.”

The AI audit assessment citation ties your internal tests to an external reference point. It shows that you are not grading your own homework but are working to recognised external standards, frameworks, and regulations (such as ISO/IEC standards, the NIST AI Risk Management Framework, or the EU AI Act). Maintaining a library of these citations is a hallmark of a mature risk evaluation process.
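
A citation library can be as simple as a list of structured records that pair each claim with its evidence and its reference. The sketch below is one possible shape, not an established schema: the `Citation` class, its field names, and the `uncited` helper are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Citation:
    """One audit claim with the evidence and external reference behind it."""
    claim: str
    evidence: str   # e.g. the test that was run and its result
    reference: str  # e.g. the standard, law, or framework relied on

    def is_defensible(self) -> bool:
        # A claim counts as defensible here only if both fields are filled in.
        return bool(self.evidence.strip()) and bool(self.reference.strip())


def uncited(citations: Iterable[Citation]) -> List[str]:
    """Return the claims that lack evidence or a reference."""
    return [c.claim for c in citations if not c.is_defensible()]


library = [
    Citation("The model is fair.", evidence="", reference=""),
    Citation(
        "The model passed the demographic parity test.",
        evidence="demographic parity score of 0.95",
        reference="ISO/IEC TR 24027:2021",
    ),
]
print(uncited(library))  # prints ['The model is fair.']
```

Running a check like `uncited` before an audit report is signed off turns the bad-practice example above into something a reviewer can catch mechanically.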

Connecting Scoring to Business Decisions

The ultimate goal of AI audit assessment scoring is to facilitate decision-making. A risk evaluation framework should dictate that if a model’s score drops below a defined threshold, a mitigation plan is automatically triggered. This takes emotion and personal bias out of the approval process. It helps ensure that a highly profitable but ethically dangerous model is not pushed through simply because a manager likes it. The score is the arbiter.
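
The threshold rule can be sketched as a small decision function. The threshold value, the `Decision` record, and the `evaluate_model` name are assumptions made for illustration; a real framework would set the threshold in its own policy.

```python
from dataclasses import dataclass

# Hypothetical threshold: below this score, a mitigation plan is required.
MITIGATION_THRESHOLD = 71


@dataclass(frozen=True)
class Decision:
    model_name: str
    score: float
    approved: bool
    mitigation_required: bool


def evaluate_model(
    model_name: str,
    score: float,
    threshold: float = MITIGATION_THRESHOLD,
) -> Decision:
    """Approve or flag a model using its audit score and nothing else."""
    below_threshold = score < threshold
    return Decision(
        model_name=model_name,
        score=score,
        approved=not below_threshold,
        mitigation_required=below_threshold,
    )


decision = evaluate_model("credit-model-v2", 64)
print(decision.approved, decision.mitigation_required)  # prints False True
```

The function deliberately takes no input for profitability or sponsor preference, which mirrors the point that the score, not the manager, decides.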

Conclusion: Evidence-Based Governance

Risk evaluation is not a feeling; it is a calculation. By implementing a rigorous AI audit assessment scoring system and backing every claim with a valid AI audit assessment citation, organisations build a shield of compliance. This evidence-based approach is the most dependable way to navigate the complex regulatory waters ahead and to give your AI audit assessment the best chance of standing up to scrutiny in any jurisdiction.